Where Stories Come Alive

Click highlighted characters and objects. Tap the background to discover hidden details.

Figures breathe, sway, and glow as the scene comes alive. Or watch the whole book play as a cinematic movie.

AI generates new scenes, narration, and images — in real time.

Free to explore. No signup required.

Two ways to experience every story

Click into characters and discover hidden details, or watch the whole book play as a cinematic film. One engine, two cuts — same scenes, same Sarvam narration.

How It Works

A scene appears

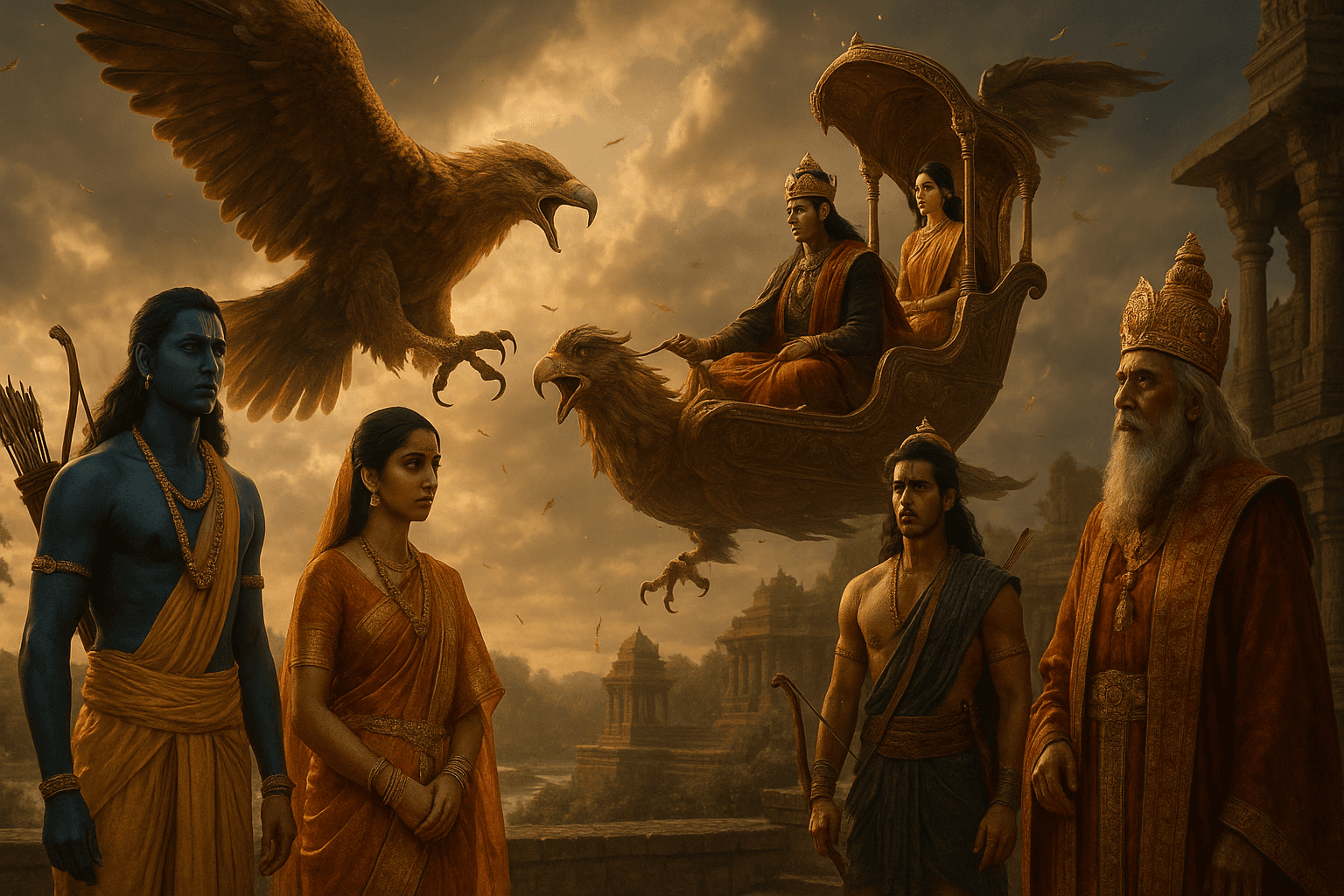

A hand-painted illustration breathes with parallax and drifting fog. A procedural mood bed plays underneath, and emotional Sarvam narration is paced and pitched to match the scene’s mood.

Click highlighted elements

Characters and objects with golden glow rings respond instantly. Tap anywhere else and the AI checks whether there’s a hidden detail worth surfacing.

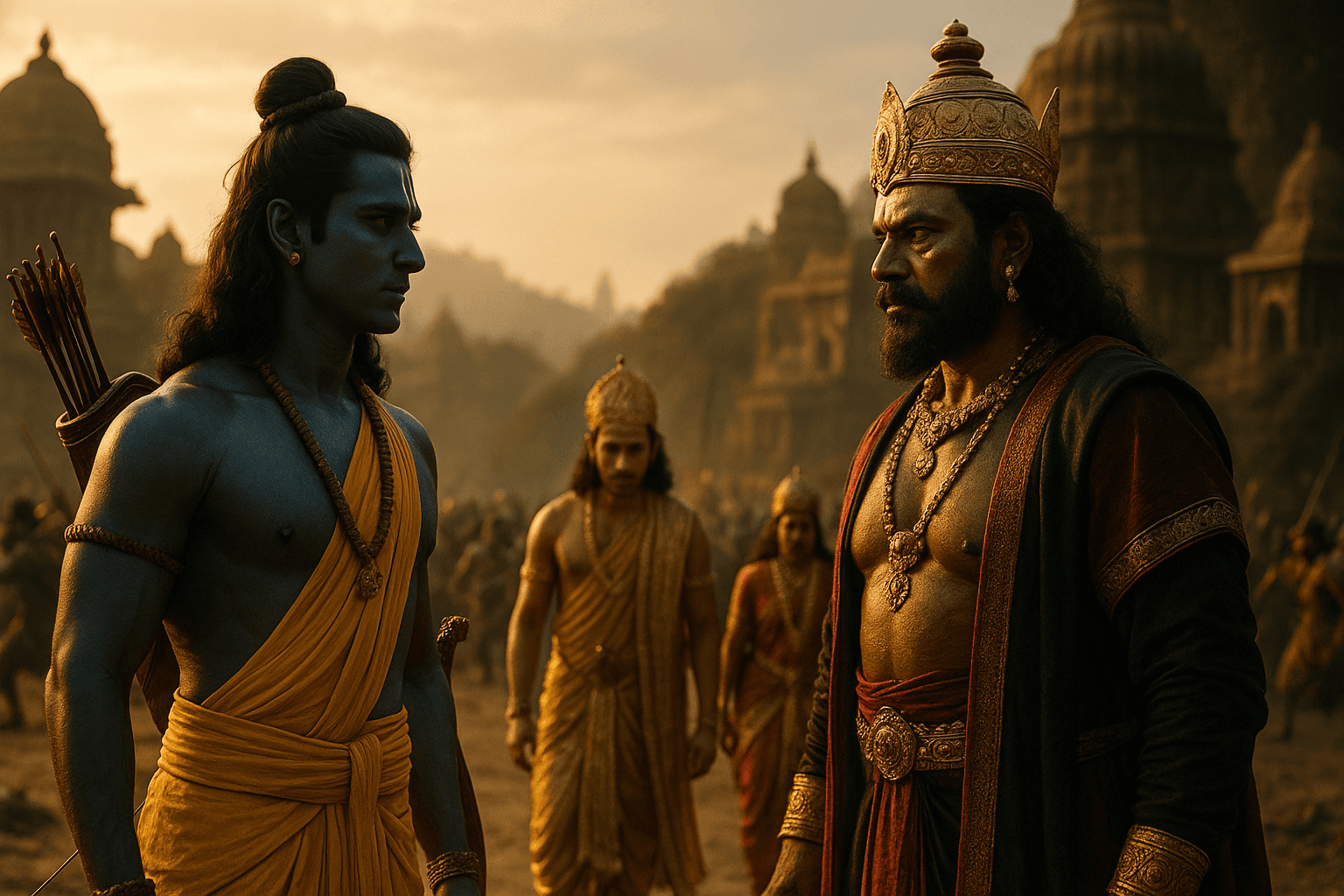

Pick a verb — the world reacts

The camera dollies in for Talk, pushes and shakes for Fight, and arcs upward for Leap. The figure quickens its breath and leans toward the addressee while a verb-keyed sprite flashes. Then a branch unfolds, keyed to the action so Talk and Fight stay distinct.
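For the curious, here is a minimal sketch of how a verb could select both a camera move and a branch. Only the verb-to-motion pairing (Talk, Fight, Leap) comes from the description above; the type names, the `reactTo` function, and the key format are hypothetical and are not the engine's actual API.

```typescript
// Hypothetical names throughout; only the verb-to-motion pairing is from the page.
type Verb = "Talk" | "Fight" | "Leap";

interface CameraMove {
  kind: "dolly-in" | "push-shake" | "arc-up";
  shake: boolean;
}

const CAMERA_BY_VERB: Record<Verb, CameraMove> = {
  Talk: { kind: "dolly-in", shake: false },
  Fight: { kind: "push-shake", shake: true },
  Leap: { kind: "arc-up", shake: false },
};

// Branches are keyed by scene AND verb, so two verbs on the
// same scene can never resolve to the same branch.
function branchKey(sceneId: string, verb: Verb): string {
  return `${sceneId}:${verb.toLowerCase()}`;
}

function reactTo(sceneId: string, verb: Verb): { camera: CameraMove; branch: string } {
  return { camera: CAMERA_BY_VERB[verb], branch: branchKey(sceneId, verb) };
}
```

Keying the branch on the verb as well as the scene is what keeps Talk and Fight from collapsing into the same outcome.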

Or watch it as a movie

The same engine renders a cinematic cut: per-scene camera motion, sentence cues, mood music ducked under narration, and the same effects DSL baked into the file. A 45-second trailer cut is also available on demand.
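As an illustration of sentence cues, the sketch below finds which caption is active at a given playback time. It assumes each cue carries a start and end in milliseconds and that cues are sorted and do not overlap; the `SentenceCue` shape and the `activeCue` helper are hypothetical, not the engine's real manifest schema.

```typescript
// Hypothetical cue shape; the real manifest format is not published on this page.
interface SentenceCue {
  startMs: number;
  endMs: number;
  text: string;
}

// Binary search over cues sorted by startMs with no overlaps.
// Returns the cue whose [startMs, endMs) window contains tMs,
// or null when tMs falls in a gap between sentences.
function activeCue(cues: SentenceCue[], tMs: number): SentenceCue | null {
  let lo = 0;
  let hi = cues.length - 1;
  while (lo <= hi) {
    const mid = (lo + hi) >> 1;
    const cue = cues[mid];
    if (tMs < cue.startMs) {
      hi = mid - 1;
    } else if (tMs >= cue.endMs) {
      lo = mid + 1;
    } else {
      return cue;
    }
  }
  return null;
}
```

A player could call `activeCue` once per animation frame with the narration's current time and show the returned text as the caption.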

Normal Flipbook vs Living Story Engine

- Per-scene motion — battle push, divine glow, slow pan

- Ambient idle — figures breathe, sway, blink, look around

- Puppet states — Talk speeds breath, Fight quickens sway

- Emotional Sarvam narration — pace + pitch shaped per mood

- Sentence cues with explicit ms timing in the manifest

- Procedural mood bed, ducked to 0.10 under speech

- Universal effects DSL — particles, dust shafts, fog, rim light

- Audio-driven mouth pulse + geometric gaze toward addressees

- Verb-aware QA — Talk, Fight, Honor each feel distinct

- Downloadable MP4 export — coming soon
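To make the ducking item concrete, here is a rough sketch of a gain curve for the mood bed. The 0.10 ducked level is the figure from the list above; the full level of 1.0, the 250 ms linear fade, and all names are assumptions chosen for illustration.

```typescript
const DUCKED_GAIN = 0.10; // from the feature list
const FULL_GAIN = 1.0;    // assumption: undecorated bed level
const FADE_MS = 250;      // assumption: linear fade on either side of speech

// Hypothetical shape: one window per narrated sentence.
interface SpeechWindow {
  startMs: number;
  endMs: number;
}

// Gain of the mood bed at time tMs: held at DUCKED_GAIN during speech,
// ramping linearly back to FULL_GAIN within FADE_MS outside it.
function moodBedGain(windows: SpeechWindow[], tMs: number): number {
  let gain = FULL_GAIN;
  for (const w of windows) {
    let g = DUCKED_GAIN;
    if (tMs < w.startMs || tMs > w.endMs) {
      const dist = tMs < w.startMs ? w.startMs - tMs : tMs - w.endMs;
      const k = Math.min(dist / FADE_MS, 1);
      g = DUCKED_GAIN + (FULL_GAIN - DUCKED_GAIN) * k;
    }
    gain = Math.min(gain, g); // the nearest sentence wins
  }
  return gain;
}
```

Taking the minimum across windows means two sentences that sit close together keep the bed low through the short gap between them instead of pumping it up and down.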

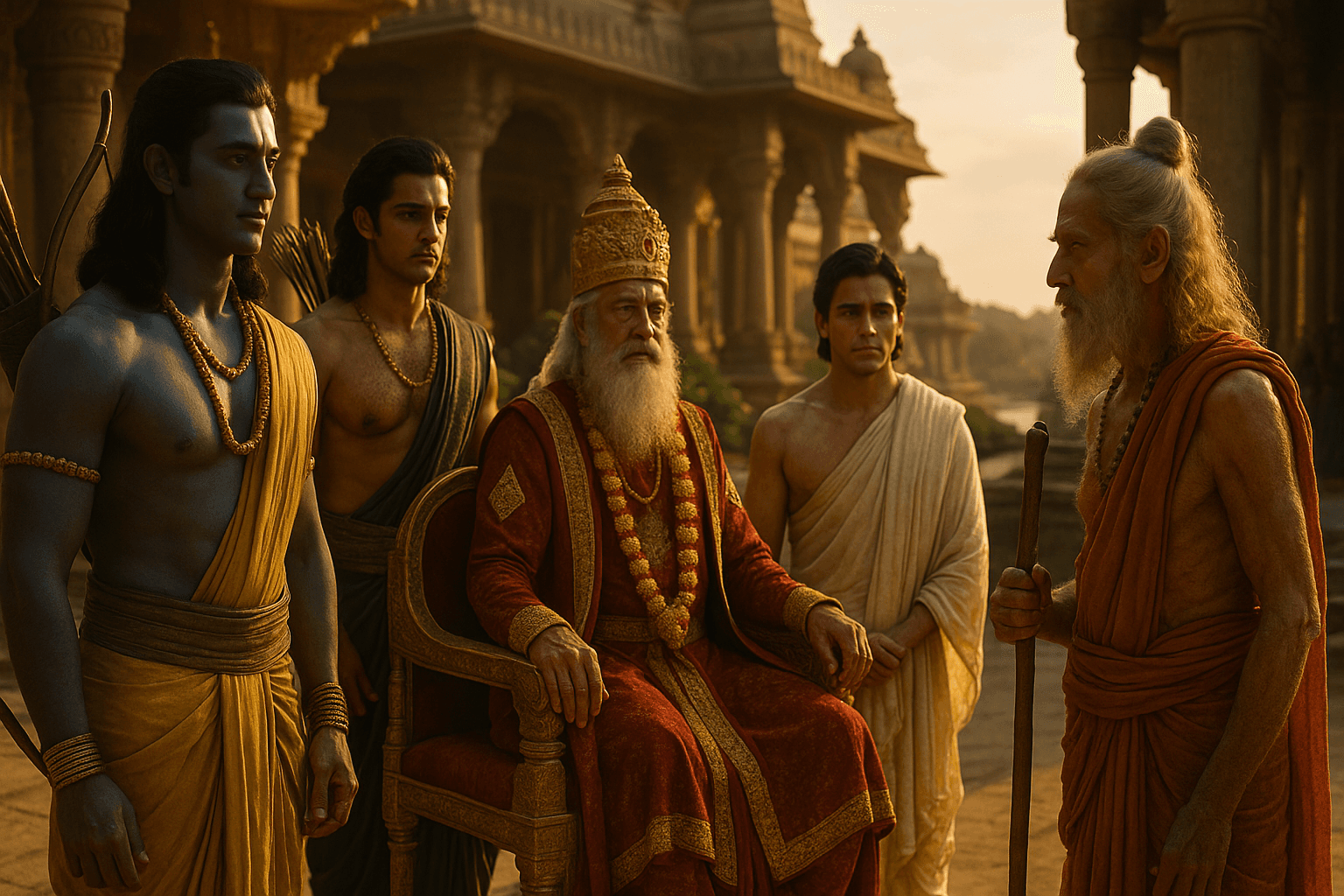

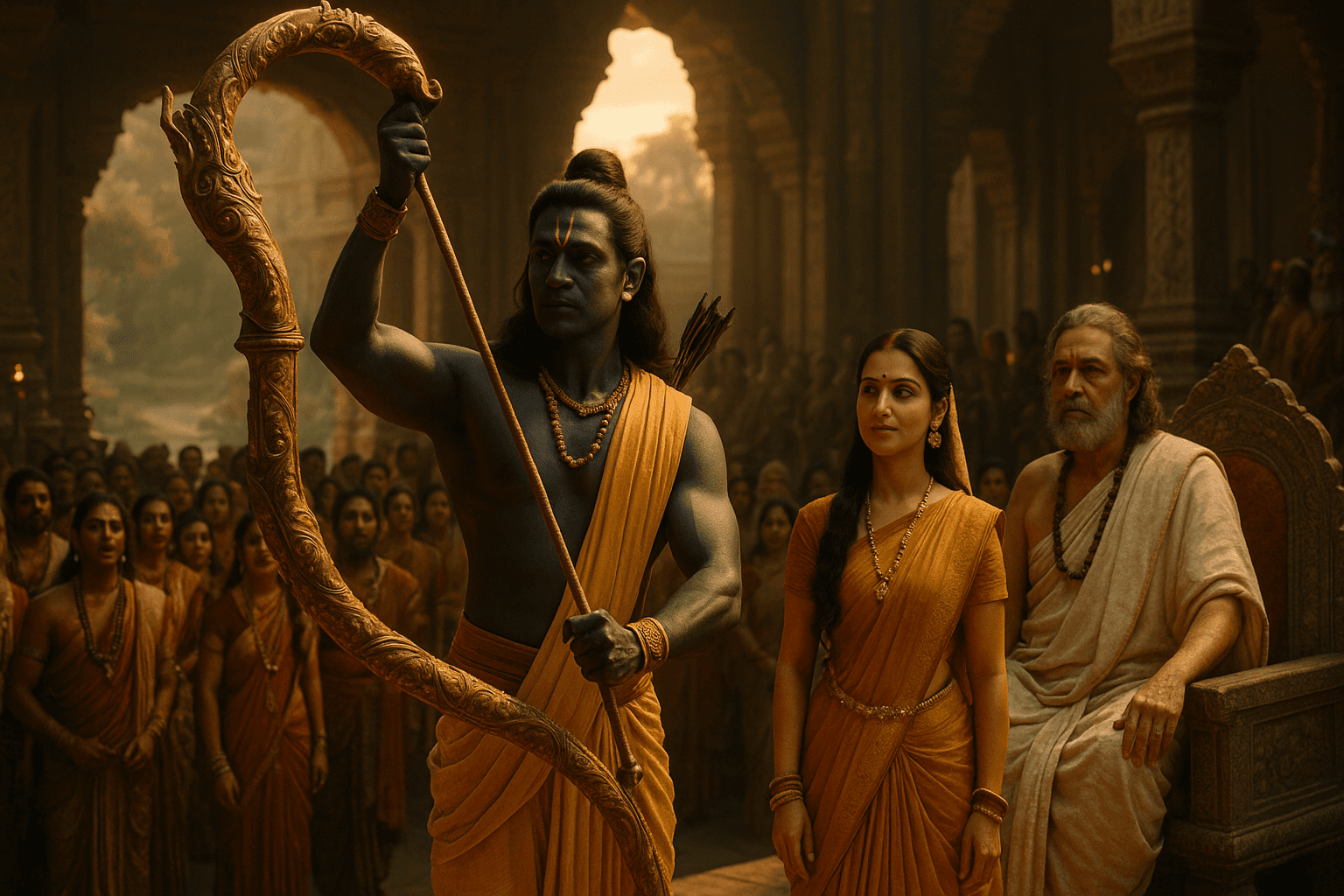

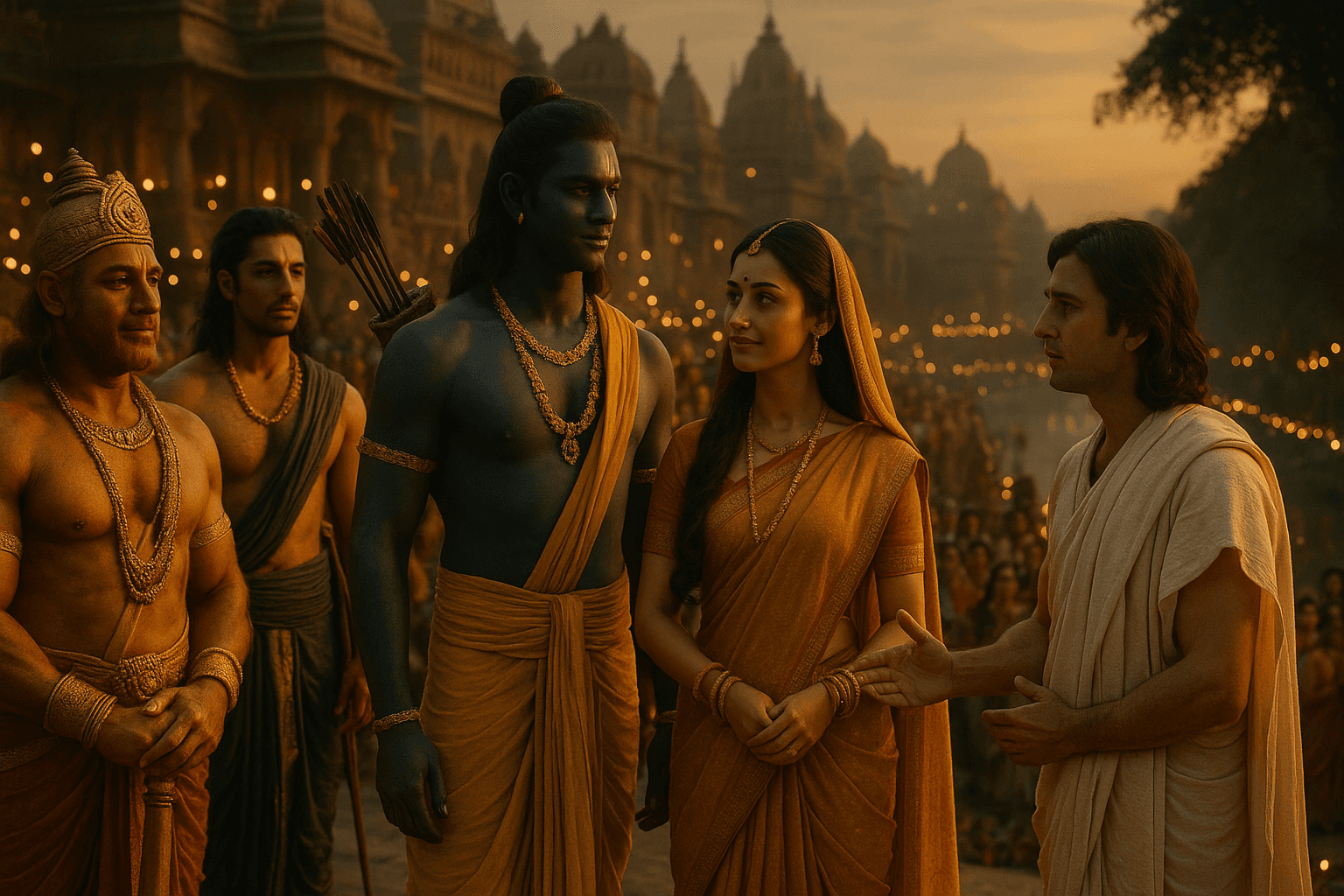

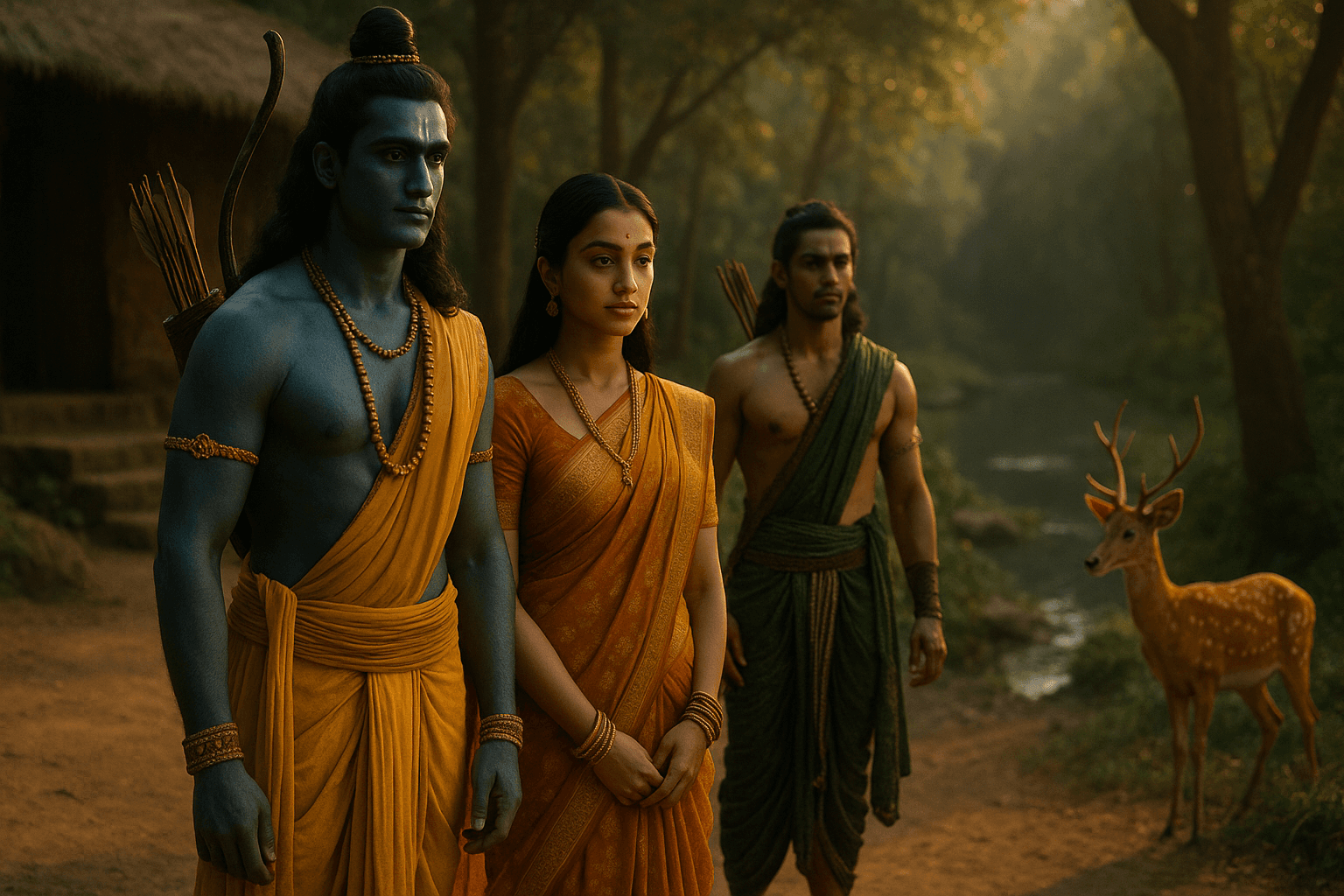

Four visual styles. One consistent cast.

Pick a style at generation time. Whichever you choose, every scene image is anchored to a canonical portrait of each character — Rama looks like the same Rama in scene 1 and scene 12. Comic books even talk: in-frame speech bubbles, narrator captions, shout starbursts — typed in as the narration plays. Style and accuracy are decoupled by design.

Live in the engine right now

One curated, three generated end-to-end by KathaKitaab from a typed title. All four read interactively. All four play as a cinematic cut in the browser.

Make your own in four steps

Same pipeline that produced Akbar and Birbal above. Each step links straight to the part of the engine that handles it — read top to bottom and you'll have a movie of your own in about three minutes.

Type any book title

Mahabharata. Panchatantra. NCERT Science Grade 6. Tenali Raman. The AI plans a 9–12 scene story arc — establish, raise the conflict, follow the rising action, turn, resolve.
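One way to picture the arc: spread the scenes evenly across the five beats in order. This is a sketch of that idea only; the beat labels paraphrase the sentence above, and the even spread is an assumption rather than a description of how the planner actually allocates scenes.

```typescript
// Beat labels paraphrase the five stages named on this page.
type Beat = "establish" | "conflict" | "rising" | "turn" | "resolve";

const BEATS: Beat[] = ["establish", "conflict", "rising", "turn", "resolve"];

// Assigns each of 9 to 12 scenes to a beat by mapping the scene's
// position in the book onto the ordered list of beats.
function planArc(sceneCount: number): Beat[] {
  if (!Number.isInteger(sceneCount) || sceneCount < 9 || sceneCount > 12) {
    throw new RangeError("sceneCount must be an integer from 9 to 12");
  }
  const plan: Beat[] = [];
  for (let i = 0; i < sceneCount; i++) {
    plan.push(BEATS[Math.floor((i * BEATS.length) / sceneCount)]);
  }
  return plan;
}
```

With nine scenes this gives two scenes to each of the first four beats and one closing scene for the resolution.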

Pick a visual style — engine builds it

Photoreal Bollywood cinematic, storybook watercolour, cinematic animation, or comic book. gpt-4o-mini writes scene narration, hotspots, and a quiz. gpt-image-1 first bakes one canonical portrait per character, then uses it as an anchor so the same Rama, Sita, or Birbal shows up in every scene. Sarvam Bulbul records the narration in a voice the AI picked to match each character.

Click anywhere

Highlighted characters and objects speak in their own voice; the LLM picks each voice archetype at generation time. Tap the empty background and the AI checks what hidden detail belongs there. Every click is cached, so repeats are instant.
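The click caching could look something like the sketch below: the first click on a target calls the model, and every repeat reuses the stored result. The `ClickCache` class, the `Resolver` signature, and the key format are hypothetical, and the retry-on-failure behaviour is an assumption.

```typescript
// Hypothetical resolver: stands in for whatever call asks the AI about a click.
type Resolver = (sceneId: string, target: string) => Promise<string>;

class ClickCache {
  private readonly store = new Map<string, Promise<string>>();
  private readonly resolver: Resolver;

  constructor(resolver: Resolver) {
    this.resolver = resolver;
  }

  // Caches the promise itself, so two quick clicks on the same
  // target share one in-flight request instead of firing two.
  lookup(sceneId: string, target: string): Promise<string> {
    const key = `${sceneId}|${target}`;
    let hit = this.store.get(key);
    if (!hit) {
      hit = this.resolver(sceneId, target);
      this.store.set(key, hit);
      // Assumption: failed lookups are dropped so a later click can retry.
      hit.catch(() => this.store.delete(key));
    }
    return hit;
  }
}
```

Storing the promise rather than the resolved text is a small design choice that also covers double-taps while the first answer is still loading.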

Watch as a movie

Same scenes, cinematic cut. Per-scene camera motion, ducked mood music, sentence-timed captions, particles + dust shafts + divine glow per the manifest. Ramayana ships pre-baked; AI books are synthesised live from the same engine.

One Engine. Any Story.

Type any title. The AI builds a complete interactive illustrated book.

Ready to enter a living story?

Click a character. Discover a hidden path. Hear the story narrated. Shape what happens next.